Tuesday’s (July 15) Nightly Business Report surprised me with what is, in my view, a very important announcement: Apple and IBM are going to cooperate to offer enterprise solutions. This significant step is covered in many other headline news stories coming out this week.

The former rivals are joining forces to combine Apple’s user-friendly hardware with IBM’s enterprise experience and software expertise to offer corporate users the best of both worlds. My many years as alliance manager for National Semi, VLSI Technology and Synopsys showed me that well-managed alliances offer many benefits to the partners and their customers.

This Apple and IBM announcement amplifies the main message I heard at SEMICON West 2014 last week. Karen Savala, President of SEMI Americas, opened the conference by emphasizing the increasing importance of industry-wide coordination and cooperation. Many sessions followed the same theme, shared ideas between companies and highlighted the economic and technical benefits of cooperation and partnerships.

The market / product focus of this year’s SEMICON West was clearly on IoT (Internet of Things). The declining prices and stalling volumes of smartphones clearly hurt our profits. That’s why our dynamic industry targets IoT as the next big revenue driver for semiconductors. While in many people’s minds “IoT” is only a buzzword today, a closer look at IoT reveals interesting opportunities for the semiconductor industry.

After this lengthy introduction, let me focus on 3D-IC topics, to qualify for distribution of this article to the 3D-InCites readers. SEMICON West confirmed what I suspected in a recent 3D-InCites blog. The many internet peripheral devices (some experts forecast up to 50B by 2020) are very likely to become the killer application, initially for 2.5D designs and eventually for 3D-ICs. These technologies promise to integrate logic, memory, analog, RF, of course MEMS/sensors and even actuators in a small space and at low cost. In addition to combining heterogeneous functions, these devices need low power dissipation, highly secure data transmission and copy protection for their differentiating software.

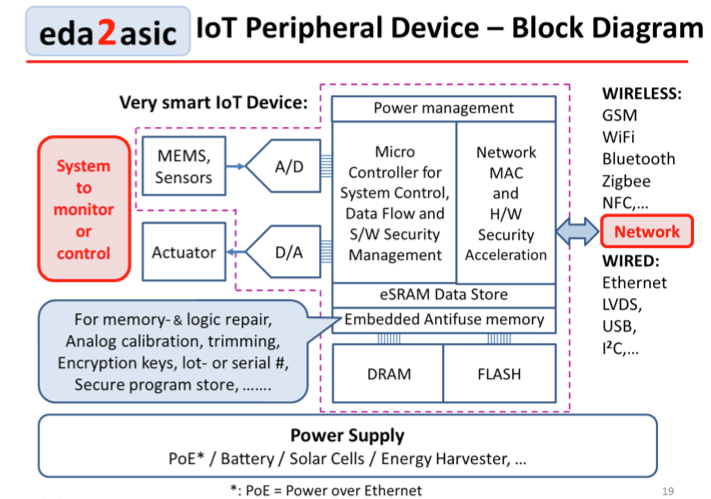

The block diagram above shows that IoT devices are composed of modules for:

- capturing environmental parameters and translating them into electrical signals,

- processing and storing data locally,

- encrypting and transmitting this data to a local host,

- supplying the energy for all of this.

If the IoT device not only captures information but also controls the equipment it’s attached to, bi-directional data flow needs to be possible to drive actuators.

The high volumes, technical complexity and cost constraints of these heterogeneous modules, as well as the need for high packing density to minimize the power wasted by charging and discharging long interconnects, are compelling reasons for using 2.5D or 3D technology to implement these modules. Because every one of these modules comprises one or a few individual die, the need for die-level IP becomes obvious.

Let me take this train of thought another step and voice my view of the good old known good die (KGD). KGD is an oxymoron, because we all know that exhaustive testing at the die level is not possible today and this problem is unlikely to be solved in the foreseeable future. The current discussions about “probably good die” should scare every P&L manager, because “probably making profit” is frowned upon by top management and Wall Street.
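To see why “probably good” compounds so badly once several die share one package, here is a minimal sketch. It assumes that every die fails independently with the same probability and that assembly itself is perfect unless stated otherwise. The function name `stack_yield` and the 95% figure are illustrative assumptions, not measured data.

```python
def stack_yield(die_yield: float, num_die: int, assembly_yield: float = 1.0) -> float:
    """Probability that every die in a stack works.

    Assumes independent die failures (a simplification) and an optional
    assembly yield that covers bonding and packaging losses.
    """
    return assembly_yield * die_yield ** num_die


# Illustrative only: the same "probably good" die in stacks of growing height.
for n in (1, 2, 4, 8):
    print(f"{n} die: stack yield {stack_yield(0.95, n):.1%}")
```

Under these assumptions, a single untested defect rate that looks tolerable per die is multiplied across the stack, which is why one bad die can scrap several good ones.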

At VLSI Technology, in the 1990s, I worked with several computer manufacturers and always asked them how they managed to have systems with 100s of millions of transistors perform without interruptions for months, even years, at a time. The answer, always delivered with a big smile, was: Redundancy and self-repair! Today we have 100s of millions of transistors in a single die (that’s why we call it an SoC) and should consider applying what worked for computer system manufacturers before.

You are probably thinking right now: “Herb, talk is cheap and today’s IC profit margins don’t allow these elaborate redundancy and repair concepts!” I couldn’t agree with you more! That’s why I would like you to consider a compromise and ask for your support to implement it. Here are my thoughts:

Memory, the largest part of an SoC die area, has had redundancy built in for many years, and becomes a known good function during final test. The previously single embedded CPU core is being replaced by multi- and many-core designs, which should allow a defective core to be disabled without significantly impacting overall performance. The fairly large transistors in the I/Os are, based on my experience, much less likely to be defective than the much smaller transistors in the IC core. What remains is the control logic, typically less than 10% of the die area. Adding redundancy to this part of a die would allow die-level repair during final test, increase 2.5D/3D-IC yields significantly, reduce cost, and accelerate market acceptance of IoT devices. The semiconductor vendors could grow IoT revenues faster and all these IoT devices would let us, as consumers, enjoy the many IoT benefits earlier.
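The trade-off behind this proposal can be sketched with a deliberately simple cost model. Everything in it is an assumption made for illustration: die cost scales linearly with area, duplicating the control logic adds area equal to its share of the die (10% here), a stack is scrapped if any die in it is bad, and the two yield figures are made-up placeholders rather than data.

```python
def cost_per_good_stack(die_cost: float, num_die: int, die_yield: float,
                        area_overhead: float = 0.0) -> float:
    """Cost of all stacks built, divided by the fraction that works.

    Assumes die cost grows linearly with area and that one bad die
    scraps the whole stack (independent failures).
    """
    stack_cost = num_die * die_cost * (1.0 + area_overhead)
    return stack_cost / die_yield ** num_die


def redundancy_pays_off(num_die: int, yield_plain: float, yield_redundant: float,
                        control_fraction: float = 0.10, extra_copies: int = 1) -> bool:
    """True if redundant control logic lowers the cost per good stack."""
    overhead = control_fraction * extra_copies  # extra area as a share of the die
    plain = cost_per_good_stack(1.0, num_die, yield_plain)
    redundant = cost_per_good_stack(1.0, num_die, yield_redundant, overhead)
    return redundant < plain


# Hypothetical yields: 95% per die as-is, 99% after repairing control logic.
for n in (1, 2, 3, 4):
    print(n, "die:", redundancy_pays_off(n, 0.95, 0.99))
```

With these placeholder numbers the extra area does not pay for itself in a single die or a two-die stack, but it does from three die upward, because the yield gain is compounded once per die while the area overhead is paid only linearly.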

Of course, such a significant change in the way we do business will need industry-wide communication and cooperation – just like SEMI’s Karen Savala suggested. ~ Herb Reiter

logic redundancy is very expensive…computer guys have done it for system level reliability, but you know how much they paid for it? DMR is 2x+, TMR is 3x+. Who wants to pay more for their chips? Not your IoT customers.

T.M., Thanks for your very valid comment!

Yes, I know that system-level redundancy and self-repair, as used in computers, represent a BIG overhead and are costly. That’s why I am suggesting a compromise: add redundancy only to the control logic. It’s a small part of the overall die, and the extent of redundancy can vary with the number of die in a 2.5D/3D stack.

The added cost of this redundancy needs to be more than offset by the cost savings from higher stack yields; otherwise it’s not economical. ~ Herb