Chiplets are the new “It” heterogeneous integration technology. In fact, the big news in Taiwan this week is that Apple is entertaining the idea of using TSMC’s 3D interconnect fabric – which is based on the company’s approach to chiplet integration. According to Taiwan News, other companies considering the technology include AMD, MediaTek, Xilinx, NXP, and Qualcomm.

So what’s the big deal about chiplets? Many believe chiplet integration is the answer to power, performance, area, and cost (PPAC) demands for everything from mobile computing and automotive applications to 5G, high-performance computing, and artificial intelligence.

Aren’t Chiplets Just a New Name for System in Package?

It depends on who you talk to and how they define chiplets. In his EE Times article, A Short History of Chiplets, Dan Scanson implies that the term “chiplets” is just another respin of the multichip module (MCM), SiP, or even the concept of heterogeneous integration itself. His proof? A Google search tracking the term “chiplet,” along with patent applications containing the word that extend back to 2005. I respectfully disagree with Scanson.

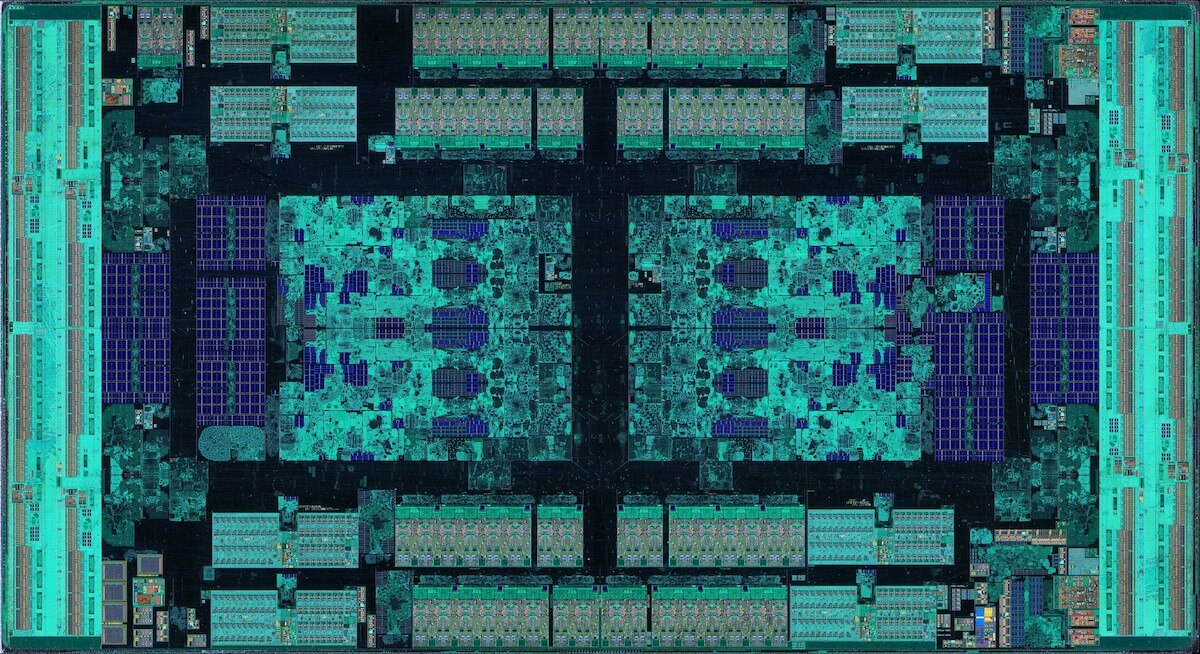

I started hearing about chiplets (aka dielets) on the R&D circuit around 2014-15, when Subu Iyer, then at IBM, started talking about the benefits of disaggregating an SoC into hardened IP blocks built on different nodes, and reintegrating them on an “interconnect fabric.” That concept morphed into the program he now leads at UCLA’s Center for Heterogeneous Integration and Performance Scaling (CHIPS). Similar programs began around the same time at DARPA and CEA Leti.

Ultimately, chiplets and SiPs are not the same. While chiplets may be classified as another form of SiP, not all SiPs are chiplets. The difference is in the design.

What Differentiates Chiplet Design from Package Co-Design?

In a recent podcast interview, I spoke with Kevin Rinebold of Siemens EDA and Robin Davis of Deca to explore how successful chiplet integration begins with a collaborative design flow. We started out by defining what we mean by chiplets from a design perspective. Rinebold explained that the difference between package co-design (which is used for ball-grid array (BGA) type packages, for example) and chiplet design is that chiplet design involves die that are specifically designed to work with other chiplets integrated at the package level.

“A lot of it has to do with how the die are communicating with each other,” he explained. “There are various interfaces, such as Intel’s advanced interface bus (AIB) to facilitate this communication. That’s really what we see as one of the bigger differentiators for chiplets.”

“Chiplet integration requires more design work to make those two chips work together because they weren’t (originally) designed to be in the same package,” noted Davis.

According to Rinebold, one simple example of what needs to be considered when designing a chiplet package is driver strength. “The driver strength for a device designed in a chiplet doesn’t need to be nearly as powerful as if it was being implemented in an ASIC,” he explained. “In an ASIC, it would have to be strong enough to drive a signal through the die, through the package, off onto a printed circuit board and into the receiver on the other end of the connection. Whereas on a chiplet, these things are within a couple of millimeters of one another, so driver strength doesn’t have to be as strong, and results in a much lower power device.”
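To put rough numbers on Rinebold’s point, here is a minimal sketch using the textbook dynamic switching power relation, P = α·C·V²·f. The load capacitances, supply voltage, and toggle rate below are illustrative assumptions, not figures from the podcast or from any real interface spec. Real die-to-die interfaces often reduce voltage swing as well, which this sketch does not model.

```python
def switching_power_watts(load_capacitance_f, supply_v, toggle_rate_hz, activity=0.5):
    """Dynamic switching power of one driver: P = activity * C * V^2 * f."""
    return activity * load_capacitance_f * supply_v ** 2 * toggle_rate_hz


# Assumed, illustrative loads (not measured values):
OFF_PACKAGE_LOAD_F = 5e-12   # die -> package -> PCB trace -> receiver
ON_PACKAGE_LOAD_F = 0.5e-12  # die-to-die link a couple of millimeters long

SUPPLY_V = 0.9        # assumed I/O supply voltage
TOGGLE_RATE_HZ = 1e9  # assumed 1 GHz toggle rate

off_package_w = switching_power_watts(OFF_PACKAGE_LOAD_F, SUPPLY_V, TOGGLE_RATE_HZ)
on_package_w = switching_power_watts(ON_PACKAGE_LOAD_F, SUPPLY_V, TOGGLE_RATE_HZ)

print(f"off-package driver: {off_package_w * 1e3:.3f} mW per signal")
print(f"on-package driver:  {on_package_w * 1e3:.3f} mW per signal")
```

With voltage and toggle rate held equal, the power per signal scales directly with the load the driver has to charge, which is why a short on-package hop can get by with a much weaker driver.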

Can Chiplets Implement SiP, Fan-out, 2.5D, and 3DIC Architectures?

Rinebold says yes, but the limiting factor is bump density, which is influenced by the communication interface. Bump density will determine the substrate options (silicon interposer or organic substrate) and whether an interconnect bridge is needed. Davis says that while interposers are currently the de facto standard for chiplet designs, Deca Technologies is exploring finer pitches with fan-out.

Is cost a limiting factor to chiplet adoption, as it was for 3D ICs with through-silicon vias? Quite the opposite, explained Rinebold. According to a paper presented by AMD at ISSCC 2021, based on AMD internal yield modeling, chiplet packages offer a 41% cost savings over a monolithic implementation with only a 10% increase in overall area.
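Why would splitting a die save money even when total silicon area goes up? A minimal sketch, assuming a simple Poisson yield model (yield = exp(-area × defect density)), shows the mechanism. This is not AMD’s internal model, and the die area, chiplet count, and defect density are made-up inputs. Packaging, test, and assembly costs are ignored, so it should not be expected to reproduce the 41% figure.

```python
import math


def poisson_yield(area_mm2, defects_per_mm2):
    """Fraction of defect-free die under a simple Poisson defect model."""
    return math.exp(-area_mm2 * defects_per_mm2)


def silicon_cost_per_good_die(area_mm2, defects_per_mm2, cost_per_mm2=1.0):
    """Silicon cost of one good die: area paid for, divided by yield."""
    return cost_per_mm2 * area_mm2 / poisson_yield(area_mm2, defects_per_mm2)


def chiplet_to_monolithic_cost_ratio(mono_area_mm2, n_chiplets,
                                     area_overhead, defects_per_mm2):
    """Silicon-only cost of n equal chiplets relative to one monolithic die.

    area_overhead is the extra total area (e.g. 0.10 for 10%) spent on
    die-to-die interfaces once the design is split up.
    """
    mono_cost = silicon_cost_per_good_die(mono_area_mm2, defects_per_mm2)
    chiplet_area = mono_area_mm2 * (1.0 + area_overhead) / n_chiplets
    chiplet_cost = n_chiplets * silicon_cost_per_good_die(chiplet_area,
                                                          defects_per_mm2)
    return chiplet_cost / mono_cost


# Hypothetical inputs: 700 mm^2 design, 4 chiplets, 10% area overhead,
# 0.001 defects per mm^2.
ratio = chiplet_to_monolithic_cost_ratio(700.0, 4, 0.10, 0.001)
print(f"chiplet silicon cost is {ratio:.0%} of the monolithic cost")
```

Because yield falls off exponentially with die area in this model, four small die waste far less silicon on defects than one large die, and that more than offsets the extra 10% of area. With zero defects the ratio is simply 1.10, the area overhead alone.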

Chiplet Design Challenges

From a hardware perspective, Davis explained that smaller bump pitches can lead to die pitch congestion. If you’re going the fan-out route rather than using an interposer, this means adding multiple redistribution layers (RDL) that require an additional copper pad, which leads to die shift issues. While TSMC solves this issue with an entire layer just for extra pads, Deca’s approach is its Adaptive Patterning.

From a software perspective, the big challenge is the disruption to the overall ecosystem with regard to design tools. Who owns the design process? Is it the IC designer or the package designer? That depends on whether the die being integrated are sourced from the same foundry or multiple foundries. There are different methodologies currently being implemented with none being the clear winner.

This is where things can get tricky. Luckily, Davis and Rinebold go into a lot more detail on this topic in the podcast episode. You can listen to it here.