Looking back almost a decade, 3D stacked memory was all the rage, and the apparent leader in its development was Micron with its heavily advertised Hybrid Memory Cube (HMC), announced at the 2011 Hot Chips conference. HMC paired a narrow bus with extremely high data rates to offer memory bandwidth far exceeding that of the then-standard DDR3.

The 3D Stacked Memory Race

The first processor to use Micron HMCs was the Fujitsu SPARC64, which was used in the Fujitsu PRIMEHPC FX100 supercomputer introduced in 2015. There were also announcements in 2015 that Intel would use HMC in Knights Landing modules for high-performance computing.

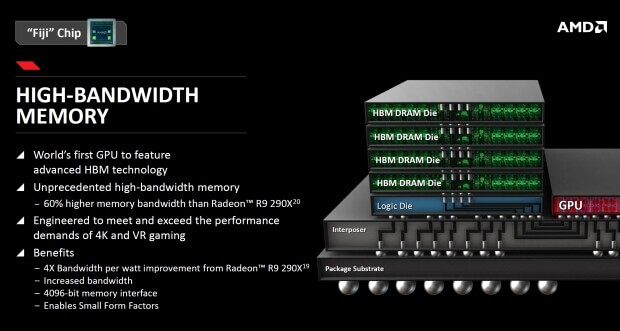

Although Micron and HMC had a head start of several years, AMD and its partner SK Hynix were able to deliver a commercially accepted solution, High Bandwidth Memory (HBM), several years ahead of Micron. As Hynix launched the first generation of HBM and announced production of HBM2, Samsung gave up waiting for HMC and switched to HBM production as well.

The HBM Technology Roadmap

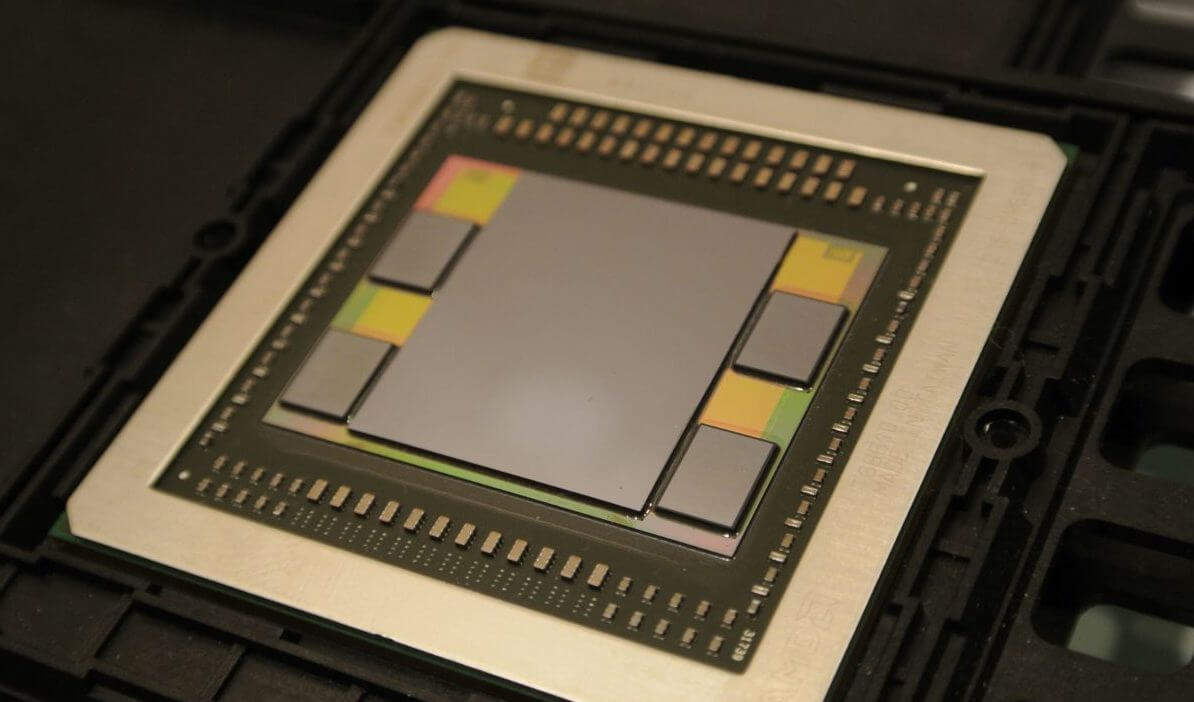

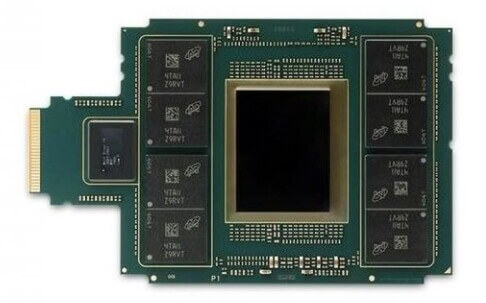

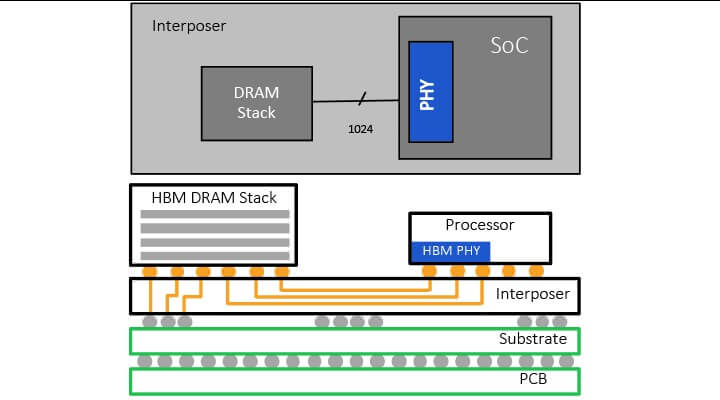

HBM reduces the overall footprint of memory devices by stacking DRAM dies on a control logic die, thus minimizing PCB real estate. Its wide bus structure reduces power consumption and delivers increased bandwidth.

By 2017 it was apparent to those in the know that HBM was the clear market winner and that Micron was not moving forward with its memory cube concept. The HMC consortium quietly faded away.

By the summer of 2018, Micron had officially announced that its memory roadmap was changing and that HMC was coming to an end. Intel, for its part, cut Xeon Phi from its roadmaps that same year, making it look like the end of this product line.

Over the last five years, makers of GPUs and network processors have turned to HBM to meet their bandwidth needs. While HBM doesn’t yet generate high-volume sales for Samsung or Hynix, it commands a price premium, which helps improve their profit margins.

Micron Makes the Switch

In 2019 Micron announced on its web page that “Micron has established an HBM development program”.

Micron, in its latest earnings report, announced that later this year the company will finally introduce its first HBM2 DRAM, so we expect that by 2021 all three global memory manufacturers will be in the HBM market. Stacked HBM2 memory is expensive, but it is hoped that this additional competition will lead to reduced prices.

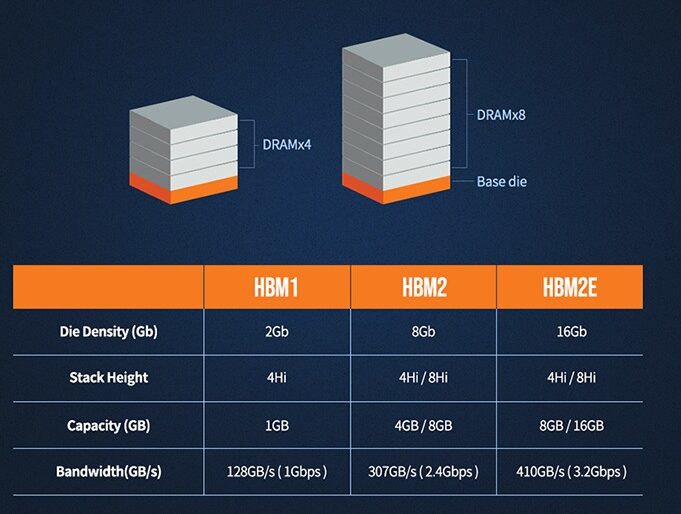

As another sign that HBM2 use is becoming more widespread, Rambus has announced a new High Bandwidth Memory 2E (HBM2E) controller and physical layer (PHY) IP solution to enable customers to integrate HBM2E memory into their products. Rambus’ controller and PHY design specifications meet JEDEC HBM2E standards.

The Rambus 3D stacked memory design supports 12-high DRAM stacks of devices up to 24 Gb each, or 36 GB of memory per 3D stack. A single 3D stack can run at 3.2 Gbps per pin over a 1024-bit wide interface, delivering 410 GB/s of bandwidth per stack. The HBM2E controller core is DFI 3.1 compatible and supports logic interfaces such as AXI and OCP.
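For readers who want to check the per-stack figures quoted above, here is a minimal Python sketch of the arithmetic. The function names are our own illustrative choices, not part of any Rambus or JEDEC tooling, and the sketch assumes that 3.2 Gbps is the data rate per interface pin and that 1 GB = 8 Gb.

```python
# Back-of-the-envelope check of the per-stack HBM2E figures quoted above.
# Function names are hypothetical; assumes 8 bits per byte and that the
# quoted data rate applies to each pin of the interface.

def stack_capacity_gbytes(dies_per_stack: int, die_density_gbit: float) -> float:
    """Capacity of one stack in GB: number of dies times density per die (Gb), over 8."""
    return dies_per_stack * die_density_gbit / 8


def stack_bandwidth_gbytes_per_s(pin_rate_gbps: float, interface_width_bits: int) -> float:
    """Peak bandwidth of one stack in GB/s: per-pin rate (Gbps) times bus width, over 8."""
    return pin_rate_gbps * interface_width_bits / 8


capacity = stack_capacity_gbytes(dies_per_stack=12, die_density_gbit=24)
bandwidth = stack_bandwidth_gbytes_per_s(pin_rate_gbps=3.2, interface_width_bits=1024)

print(f"Capacity per stack:  {capacity:.0f} GB")       # 12 x 24 Gb = 288 Gb = 36 GB
print(f"Bandwidth per stack: {bandwidth:.1f} GB/s")    # 3.2 x 1024 / 8 = 409.6 GB/s
```

The computed 409.6 GB/s rounds to the 410 GB/s quoted for the stack. These are peak figures, and sustained bandwidth in a real system would be lower.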

For all the latest in advanced packaging, stay linked to IFTLE...