Micron’s recent announcement that they will be shipping samples of the Hybrid Memory Cube (HMC) and ramping to production in 2014 has brought about another flurry of activity in 3D DRAM discussions. I’ve had more than one person ask me about the differences between HMC and High Bandwidth Memory (HBM). After attending last week’s IEEE 3D IC Symposium, I was curious about the differences between Tezzaron’s DiRAM, HMC and HBM. Add to that the much-discussed but little-understood monolithic 3D DRAM approach being promoted by MonolithIC 3D, and you have a recipe for confusion.

Since I was surrounded by 3D technology experts, I asked them for help unravelling some of the more confusing bits. Paul Franzon, North Carolina State University, outlined the differences between HMC and HBM for me, and David Chapman, Tezzaron, explained the motivation behind DiRAM and the differences between it and HBM and HMC. Zvi Or-Bach of MonolithIC 3D provided insight into his company’s approach. Hopefully this will answer some of your questions as well, but if it doesn’t, feel free to pose yours in the comment area and I will do my best to get the experts to answer!

Not All 3D RAMs are Created Equal

Let’s start by looking at the different motivations behind these devices. DRAM is one segment of volatile memory, or Random Access Memory (RAM), a category that also includes SRAM. Chapman explained that over the past 20 years, regardless of the memory type, latency has remained almost constant, so manufacturers needed to find another way to improve memory performance. Increasing bandwidth has been the primary approach SRAM and DRAM architects have used to improve RAM performance. To keep costs as low as possible in compute market applications, DRAM designers have been trading off random accessibility to improve bandwidth. Chapman said that while this is fine for personal computing and mobile consumer devices, it is a big issue for some kinds of work, such as networking equipment, supercomputers, and specialized compute operations like image processing. “Different needs call for different volatile memory,” noted Chapman.

Historically, DRAM captured 80% of the volatile memory market. The other 20% was divided between slow SRAM (16%) and fast SRAM (4%). Over the past 20 years fast SRAM and DRAM designers both increased bandwidth. Meanwhile slow SRAM has all but disappeared from the market because nobody built a high bandwidth slow SRAM, leaving a vacuum that needs to be filled. One solution was high bandwidth devices designed using DRAM bit-cells to deliver higher random access transaction rates at moderate latency, explained Chapman. Interestingly, he says they have landed in exactly the same price and performance niche formerly occupied by slow SRAMs. Micron’s reduced latency DRAM (RLDRAM) product family is the best known of this class of volatile RAM products. So today, despite latency holding almost constant within each class of memory, and bandwidth continuing to go up for all memory types, the key distinguishing characteristic that separates classes of RAMs is random access transaction rate. Those top few percentage points of the volatile memory market – Chapman calls it “the layer of cream at the top of the RAM market” – continue to draw interest in applications that require random-access optimized memory.
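
To make the distinction between bandwidth and random access transaction rate concrete, here is a minimal sketch in Python. It is not based on any vendor data: the two devices, their bank counts, and their row cycle times are hypothetical values chosen only for illustration, and the model assumes that random accesses spread evenly across banks and that each bank can start a new random access once per row cycle.

```python
from dataclasses import dataclass


@dataclass
class MemoryDevice:
    """Hypothetical device description; none of these figures come from a datasheet."""
    name: str
    peak_bandwidth_gb_s: float  # assumed streaming bandwidth in GB/s
    row_cycle_ns: float         # assumed minimum time between random accesses to one bank
    banks: int                  # assumed number of independently accessible banks


def random_transactions_per_us(dev: MemoryDevice) -> float:
    """Upper bound on random accesses per microsecond.

    Assumes accesses are spread evenly over all banks, so each bank
    contributes one access per row cycle.
    """
    return dev.banks / dev.row_cycle_ns * 1000.0


def effective_random_bandwidth_gb_s(dev: MemoryDevice, bytes_per_access: int) -> float:
    """Bandwidth actually delivered for small random accesses, in GB/s.

    The result is limited by whichever is lower: the interface's peak
    bandwidth or the transaction rate times the payload size.
    """
    # transactions/us * bytes = MB/s; divide by 1000 to get GB/s
    by_rate = random_transactions_per_us(dev) * bytes_per_access / 1000.0
    return min(dev.peak_bandwidth_gb_s, by_rate)


# Two made-up devices with the same peak bandwidth but different random-access behavior.
commodity_like = MemoryDevice("commodity-like", peak_bandwidth_gb_s=12.8, row_cycle_ns=48.0, banks=8)
random_optimized = MemoryDevice("random-optimized", peak_bandwidth_gb_s=12.8, row_cycle_ns=8.0, banks=16)

for dev in (commodity_like, random_optimized):
    rate = random_transactions_per_us(dev)
    bw = effective_random_bandwidth_gb_s(dev, bytes_per_access=8)
    print(f"{dev.name}: {rate:.1f} random accesses/us, {bw:.2f} GB/s on 8-byte random reads")
```

Under these assumed numbers, both devices advertise the same peak bandwidth, yet the one with more banks and a shorter row cycle sustains far more small random accesses. That gap, rather than headline bandwidth, is the characteristic Chapman describes as separating the classes of RAM.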

“The ‘layer of cream’ in volatile memory products has no business being in laptop or desktop applications. There is a non-compute market for memory, and networking is the biggest piece of that market,” said Chapman. It’s that layer of cream that Tezzaron is targeting with its “dis-integrated memory” on logic – or DiRAM. While both HMC and HBM claim to target networking and server applications, they both have fundamentally the same characteristics of DRAM and are more suited – in price and performance – to the compute market memory needs. “We (Tezzaron) have performance characteristics that allow us to shoot for the higher end of the market,” said Chapman.

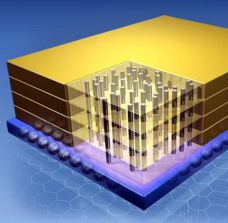

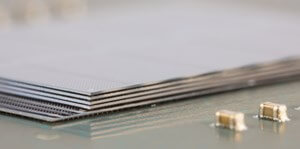

At first glance, schematic drawings of HMC, HBM and DiRAM look similar. But structurally they are very different:

HMC

Manufactured by Micron Technology and driven by the HMC Consortium, the HMC is a stack of four DRAM die on a logic (SerDes) chip that contains the interfaces. While internally HMC is considered to be a true 3D IC memory-on-logic stack, “it stops being 3D at its own package,” explained Franzon. It goes into a conventional package and is integrated that way onto the printed circuit board (PCB), talking across the PCB to the host. This limits its off-chip bandwidth. One advantage of HMC is that it allows Micron to preserve its business model and deliver a packaged memory to a customer, explained Chapman. Micron has begun shipping 2GB samples to customers, and plans to ship 4GB samples in 2014. (For an in-depth look inside the HMC, check out this feature story on Solid State Technology.)

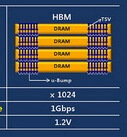

HBM

High Bandwidth Memory (HBM) is a JEDEC standard being developed by JEDEC’s HBM task force. According to Franzon, it is a memory stack only, without logic. Unlike HMC, it is designed for 3D IC integration and can be integrated only on an interposer, which interfaces with a CMOS I/O interface. Rather than selling a packaged part, manufacturers of HBM will essentially be selling bare die to be mounted on an interposer. While the JEDEC website claims that HBM leverages Wide I/O and TSV technologies, Franzon noted that 8 ports of 128GBs “is not consistent with the Wide I/O specifications.” Target publication for the JEDEC HBM Standard is 2013. Franzon reported that high-volume manufacturing for HBM is targeted for 2015.

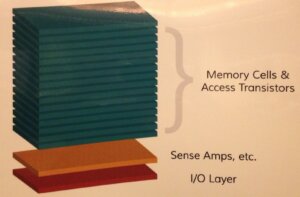

DiRAM

The best way to explain Tezzaron’s dis-integrated memory (DiRAM) is to start with what it is NOT. As Chapman explained it, DiRAM is not stacked memory die. Memory cells are not RAM until they are in the stack. Rather, it is constructed of stacked layers of memory cells and access transistors, which are then integrated with a logic die containing sense amplifiers. The I/O layer can be swapped out depending on the needs of the customer. Tezzaron’s approach is unlike any other in the industry. The memory stack is assembled and interconnected using room-temperature wafer-to-wafer bonding (Ziptronix DBI) without testing prior to bonding. Thin wafer handling is unnecessary because wafers are permanently bonded and thinned as they go. Through-silicon vias (TSVs) are fabricated using a via-first approach, and filled with tungsten rather than copper. Vias are 1µm wide, and therefore DiRAM can fit 10 where HMC can fit 1. This provides an enormous advantage in the number of interconnects possible and allows for effective built-in self-test and repair. The wafers are thinned to 5µm rather than 50µm, making them the world’s thinnest wafers, says Chapman. Tezzaron’s first DiRAM customer will take deliveries starting 2Q14. The technology is expected to be commercially available in the first half of 2015.

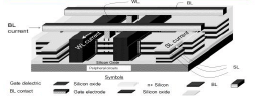

The Monolithic Approach to 3D DRAM

An alternative to creating 3D DRAM structures by stacking memory die and interconnecting them using TSVs in a chip-to-chip configuration is a build-up approach that stacks transistor layers in an on-chip 3D configuration. This is becoming known as monolithic 3D. Recently introduced by Samsung for its 3D NAND technology and in the works at Toshiba and SanDisk, the monolithic concept is gaining traction in the industry as a viable approach to on-chip 3D integration.

Up until now, monolithic 3D approaches have been primarily under development for non-volatile memory technologies like 3D NAND. But one company, MonolithIC 3D, has targeted 3D DRAM as an application for its patented processes, which, according to CEO and founder Zvi Or-Bach, offer another way to continue Moore’s Law while controlling the costs that are skyrocketing in traditional 2D CMOS scaling. Essentially, he says, costs are controlled by leveraging legacy front-end tools and by processing multiple layers in parallel, so that one lithography step yields multiple device layers.

Or-Bach also noted that MonolithIC 3D’s approach is not competitive with 3D TSV, but rather complementary. “There’s a problem with device-to-device interconnect,” he noted. “On-device interconnect has scaled down, off-chip has not. Using TSVs in close proximity can achieve 100x better throughput vs. conventional off-chip interconnect processes.” Or-Bach says he sees opportunities for stacking monolithic 3D devices using TSVs in the future.

MonolithIC 3D’s DRAM offering is a monolithically stacked, single-crystal silicon, double-gated floating-body DRAM. Lithography steps are shared among multiple memory layers to reduce bit cost. Peripheral circuits below the monolithic memory stack provide control functions. Unfortunately, the company’s technology has yet to move beyond “PowerPoint engineering.” Or-Bach reports that they have caught the attention of CEA Leti, which provides another level of credibility, and have had a paper accepted for IEDM 2013. The next step is to establish relationships with development partners and start building stuff. “I’m eager to work with partners to prove the technologies and take it from PowerPoint to reality,” said Or-Bach.

Hopefully this clears up some of the confusion. Any questions? ~ F.v.T.

Francoise

Another great summary from the “Queen of 3D”

Keep em coming.

Thank you Rick! Always happy to have happy readers. 🙂

Congratulations Francoise you have finally discovered Tezzaron and understood their radical departure from Cu filled TSVs and the perils of thin then bond. Hope the mainstream of 3D by TSV too will follow suit.

Thanks Dev, but I’ve been following and writing about Tezzaron for years. 🙂 However, I don’t expect their technology will ever hit mainstream consumer applications, because it’s not a low cost solution. While it does offer a cost advantage in the market sector it targets, I believe HMC and HBM will provide the more mainstream solutions.

I think the Micron folks have delusions of grandeur in the Z-direction. They call this a CUBE? Now a true silicon cube would be something to talk about….

Nick – We can likely give Micron’s marketing team credit for coming up with the name “Hybrid Memory Cube”. Somehow Hybrid Memory Stack doesn’t have the same ring to it. However, if what they claim is true about the technology, HMC is likely to propel 3D into mainstream commercialization, which is definitely something to talk about.

Francoise

I would be interested in hearing what cost advantages you see with HBM & HMC in relation to the Tezzaron DiRAM design?

Hi Rick –

I should clarify – by cost, I meant in reference to the cost points associated with the target markets of the devices. It’s my understanding that Micron is first targeting the high-end computing market (servers, data centers, telecommunications) for HMC, but will ultimately take this into any market that expresses interest. On the other hand, Tezzaron isn’t subject to the same cost pressures, as its target market space is the void left by SRAM in high-end computing.