Thermal management is one of the last remaining challenges of 3D integration. The European 3D Summit (Jan 23-25, 2017) therefore devoted its entire R&D segment to the solutions now in development, in a session titled Tackling the Thermal Management Challenge. Chaired by Jean Michailos of STMicroelectronics, the session featured the following talks:

- Integrated and Self-Adaptive Microfluidic Cooling for Next Generation Microelectronic Packages, Dr. Luc G. Fréchette, Professor of Mechanical Engineering, University of Sherbrooke

- Thermal-Aware Design and Management of 2D/3D Many-Core Servers, Marina Zapater, Ph.D., EPFL

- Thermal Aspects of 3D and 2.5D System Integration, Herman Oprins, Research Engineer, imec

- Functional Electronic Packaging for Dense Server-System Scaling, Thomas Brunschwiler, Research Staff, IBM Research – Zurich

While the idea has been somewhat scoffed at over the years (who would put LIQUID in ELECTRONICS, after all), it seems that all research roads are currently pointing to liquid cooling as the ideal approach to dealing with hotspots. Ironically, it’s turning out that 3D stacking processes themselves are enabling these cooling approaches to solve the heat and power issues in 3D stacks.

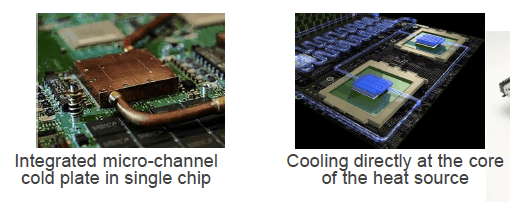

To start off the session, Fréchette described how a silicon cold plate containing microfluidic channels can manage hot spots in advanced packages. The cold plate eliminates the need for a heat spreader, and its channels can be placed close to the chip, so dedicated µchannels cool the hot spots directly. It also removes the thermal resistance of a thermal interface material (TIM) layer. The result is a compact solution with delocalized heat rejection; the trade-off is that it requires a liquid pump.
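The benefit of dropping the TIM and heat spreader can be illustrated with a simple one-dimensional series thermal-resistance model, in which the junction temperature rises above the coolant temperature by the dissipated power times the sum of the resistances along the heat path. This is a minimal sketch: the layer names, resistance values, power, and coolant temperature are hypothetical numbers chosen for illustration, not data from Fréchette's talk.

```python
# Minimal 1D series thermal-resistance sketch.
# ASSUMPTION: all layer names and values below are hypothetical, for illustration only.

def junction_temperature(power_w, coolant_c, resistances_k_per_w):
    """Junction temperature when all heat flows through resistances in series."""
    return coolant_c + power_w * sum(resistances_k_per_w)

# Hypothetical lid-attached stack: die -> TIM -> heat spreader -> TIM -> cold plate
conventional = {"die": 0.05, "tim1": 0.10, "spreader": 0.05, "tim2": 0.10, "cold_plate": 0.10}

# Hypothetical integrated silicon cold plate: microchannels next to the die,
# so the TIM layers and the heat spreader drop out of the heat path
integrated = {"die": 0.05, "cold_plate": 0.10}

power = 100.0      # W, hypothetical
coolant_in = 25.0  # deg C, hypothetical

t_conv = junction_temperature(power, coolant_in, conventional.values())
t_int = junction_temperature(power, coolant_in, integrated.values())
print(f"conventional: {t_conv:.1f} C, integrated: {t_int:.1f} C")
```

Lumping the convective resistance of the coolant into a single "cold_plate" term keeps the model short; a real package would also need spreading resistance and hot-spot (non-uniform) power maps, which a 1D model cannot capture.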

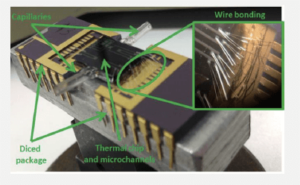

This approach has been demonstrated using a test chip stacked on top of a cold plate, with both electrical and µfluidic interconnects. The µfluidic I/Os consisted of capillary tubes glued to the die stack, and wire bonds formed the electrical interconnects. The results showed that the µchannels are effective and that backside stacking delivers better performance.

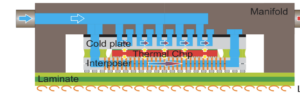

Fréchette’s team at the University of Sherbrooke is participating in a European-funded project to develop intelligent thermal management for 3D packaging and system-in-package (SiP), along with applications that use it. The approach combines adaptive microfluidics with thermoelectric temperature mapping and energy harvesting, integrated into an interposer or 3D stack.

Key takeaways from Fréchette’s presentation: microfluidics is promising for addressing hot spots not only in high-performance computing applications but also in mobile devices. It can cool hot spots directly and is well suited to thinned die and 3D stacks.

Zapater presented her team’s work on using thermally aware design and predictive modeling to solve cooling issues in data centers that have adopted 3D multiprocessor systems-on-chip (3D MPSoCs) to meet the growing computational and memory bandwidth needs of large-scale computing.

While 3D MPSoCs solve memory bandwidth issues, they also increase power density, and with it thermal issues such as localized hotspots that cannot be addressed by air-cooling the data center itself. Using model-based predictive control tools, the team has developed a thermally aware design that integrates liquid cooling and fully depleted silicon-on-insulator (FDSOI) technology to cool an entire MPSoC while also powering the memory modules.

Rather than discuss innovative cooling solutions, Oprins presented test-vehicle data on the thermal aspects of 3D integration, comparing 3D stacking with interposer (2.5D) integration. The stackable test chip contained integrated heaters and sensors that made it possible to apply a user-defined power map to both tiers and to scan the temperature across the full chip surface. The interposer configuration showed better thermal performance than the 3D stacked package in both the steady-state and transient regimes, at the cost of a larger package footprint.

Oprins also showed that hybrid wafer-to-wafer bonding is a promising way to lower inter-tier thermal resistance, because it reduces the stand-off height and uses an inorganic bonding material. It delivered a 4x improvement compared with the 40 µm-pitch µbump interface required for die-to-die stacking.
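Why both changes help can be seen from a simplified one-dimensional conduction estimate. This sketch assumes the inter-tier interface behaves as a uniform layer of thickness $t$ and effective thermal conductivity $k$ over the same area $A$ in both cases, and it ignores boundary and contact resistances, so it explains the trend rather than reproducing the measured 4x figure:

$$
R_{\text{th}} = \frac{t}{k\,A}
\qquad\Rightarrow\qquad
\frac{R_{\text{hybrid}}}{R_{\mu\text{bump}}} = \frac{t_{\text{hybrid}}}{t_{\mu\text{bump}}}\cdot\frac{k_{\mu\text{bump}}}{k_{\text{hybrid}}}
$$

Reducing the stand-off shrinks the first factor. Assuming the µbump interface is filled with a lower-conductivity organic material (such as underfill), replacing it with an inorganic bonding layer of higher effective conductivity shrinks the second.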

To wrap up the session, Brunschwiler brought the conversation back to liquid cooling as a way to integrate thermal management into electronic packages for high-density server systems. He reviewed how data centers have evolved over the years and how growing computational needs have driven ever-higher density.

Hot-water cooling with heat reuse was first deployed on a large scale in 2012 at SuperMUC at the Leibniz Supercomputing Centre, then the most powerful supercomputer in Europe. He walked us through the evolution of backside cooling, including lid-attached, direct-attached, and embedded silicon cold plates with microchannel structures. He also shared several concepts for disruptive cooling approaches. One, dual-side cooling, uses a convective interposer that integrates a cold plate with microchannels. In 3D configurations, interlayer cooling also enables volumetric heat removal.

While all these approaches to thermal management are still in R&D, they represent significant efforts to address the thermal issues that come with the increasing density demanded by today’s high-performance computing. ~ FvT