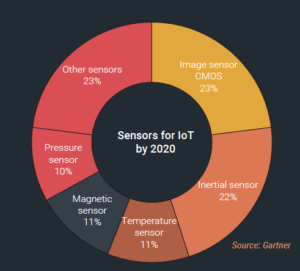

There’s no doubt about it. Imaging sensor technologies have come a long way since the introduction of the digital camera. In fact, according to Gartner, image-based sensors will be the single largest category of internet of things (IoT) sensing devices (Figure 1). That was made eminently clear a few weeks ago at the European Imaging and Sensors Summit, held September 21–22 in Grenoble, France, and co-located with the MEMS and Sensors Summit. While I spent most of my time at the MEMS and Sensors Summit, I did catch the Start-Up Pitches Session, and not one presentation was about new image sensor technologies for smartphones. Instead, we heard about universal mini-labs (Vincent Poher, Avalun); sensors and devices for IoT applications (Palle Dinesen, UbiqiSense); graphene photodetectors for machine vision, night vision, spectroscopy, and more (Tapani Ryhänen, Emberion); and image sensors for biomedical and scientific applications (Renato Turchetta, IMASENIC).

From iPad to LabPad®

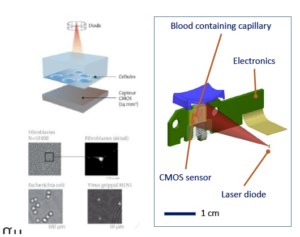

Developed by Avalun, a spin-out of Leti, and introduced in 2016, the LabPad is a portable, Bluetooth-connected mini-lab that lets the user take a range of biological measurements on the same device using dedicated micro-cuvettes. Its first application was measuring blood coagulation time so that people taking warfarin, a blood thinner, could test themselves rather than go to a lab for frequent testing, explained Vincent Poher. The biological reaction takes place inside a dedicated microfluidic micro-cuvette placed in front of a CMOS image sensor. The LabPad can also be used for blood typing and virus detection.

According to Poher, this miniature microscope has the same analytical capabilities as a standard transmission optical microscope. With it, you can compare images, detect motion, measure coagulation time, and mine the data for the time it takes red blood cells to stop moving. The LabPad is the only device on the market that can do all of this on the same reader, Poher said. What makes this lensless imaging technology a stroke of genius is that it is built from off-the-shelf CMOS components originally developed for smartphones (Figure 2).
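Poher didn't describe how the LabPad turns images into a coagulation time. As a minimal sketch, assuming a sequence of same-sized grayscale frames captured at a fixed frame rate, one simple approach is frame differencing: measure the mean absolute change between consecutive frames and report the moment after which that change stays below a threshold for several frame pairs in a row. The function names, threshold, and hold count below are hypothetical illustrations, not Avalun's method.

```python
from typing import List, Optional, Sequence

# Hypothetical representation: one grayscale frame as rows of pixel values.
Frame = Sequence[Sequence[float]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two same-sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count if count else 0.0


def clotting_time(frames: List[Frame], fps: float,
                  threshold: float = 1.0, hold: int = 3) -> Optional[float]:
    """Return the time in seconds of the last frame before motion stays below
    `threshold` for `hold` consecutive frame pairs, or None if it never settles.

    Assumption: "cells stopped moving" == consecutive frames nearly identical.
    """
    quiet = 0  # length of the current run of low-motion frame pairs
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i - 1], frames[i]) < threshold:
            quiet += 1
            if quiet == hold:
                # The quiet run began at pair (i - hold, i - hold + 1),
                # so the image stopped changing at frame i - hold.
                return (i - hold) / fps
        else:
            quiet = 0  # motion resumed; restart the run
    return None


# Usage: three frames of simulated motion, then a static scene.
dark = [[0, 0], [0, 0]]
bright = [[10, 10], [10, 10]]
frames = [dark, bright, dark] + [bright] * 7
print(clotting_time(frames, fps=10.0))  # prints 0.3
```

Requiring several quiet frame pairs in a row, rather than one, keeps a single lucky near-identical pair from being mistaken for the end of motion; a real device would also need to handle sensor noise and illumination drift when choosing the threshold.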

The LabPad is the centerpiece of Avalun’s “My Handy Lab” concept, which also includes TSmart® micro-cuvettes that measure biological parameters from a small drop of capillary blood, and TSmart strips, single-use rapid tests that allow quantitative and qualitative assays on a small drop of blood. The LabPad outputs data to avaConnect, Avalun’s app for formatting, securing, and transmitting the biological results to the right healthcare professional.

Intelligent Low-Cost Sensing for the IoT

Probably one of the best descriptions of the IoT I’ve heard came from Palle Dinesen’s presentation, where he quoted Business Insider to set the stage for what UbiqiSense is all about:

“Contrary to how digital consultants tend to explain it, the internet of things is not a smart refrigerator having a chat with a smart toaster, but instead a collection of hundreds and hundreds of sensors picking up information, forming a system that grabs all the possible data it can, and then offers it to people, or in exchange for money, for all kinds of services.”

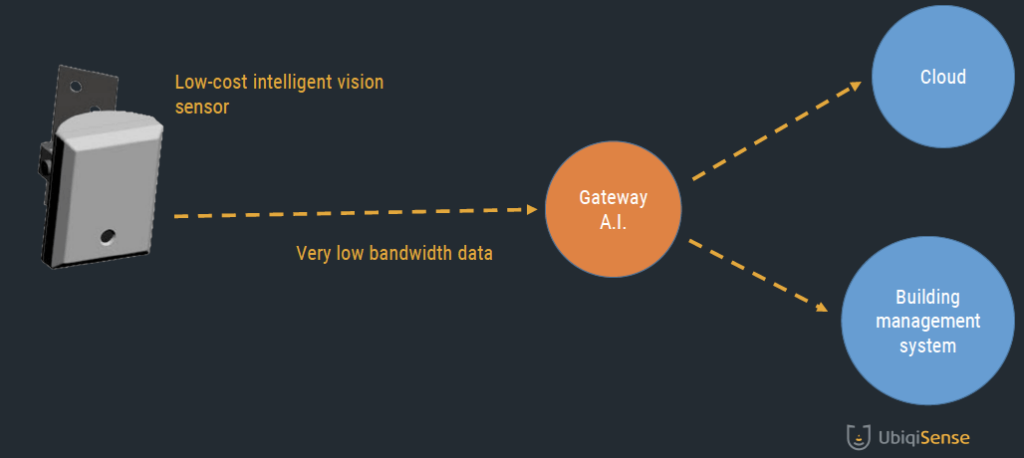

UbiqiSense aims to provide low-cost, scalable IoT sensing solutions that integrate image sensors with artificial intelligence and machine learning to create context-aware computer vision technology for smart buildings, homes, and cities.

Leveraging commoditized mobile hardware seems to be a trend among these start-ups. As with Avalun’s LabPad, the building blocks of UbiqiSense systems include low-cost, low-to-medium-resolution smartphone camera modules and low-cost embedded systems with efficient processing architectures. The reasoning is that these sensing applications don’t need high image quality, and that this hardware is ripe for intelligent sensing.

Of the five key IoT architecture elements Dinesen listed (sensors, data collection and storage, network, analytics, and control), UbiqiSense’s vision sensing technology covers the first three and a half. In other words, the company offers a cost-efficient camera and vision processing sensor that delivers very low-bandwidth data to a proprietary, artificially intelligent gateway, which in turn sends it to the cloud or to facility servers for analytics (Figure 3). Dinesen presented a use-case scenario in which this image data can be used for energy conservation, facility management, and space management.
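The presentation didn't specify what UbiqiSense’s sensor actually transmits. As a hedged sketch of why doing the vision processing on the device keeps bandwidth low, the hypothetical record below summarizes a scene as a small JSON message (the field names are illustrative assumptions, not UbiqiSense’s format) and compares it with one uncompressed 640 × 480, 8-bit grayscale frame.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class OccupancyEvent:
    """Hypothetical summary a smart camera might send instead of images."""
    sensor_id: str
    timestamp: int      # Unix time in seconds
    zone: str           # e.g. a room or desk area being monitored
    people_count: int


def encode_event(event: OccupancyEvent) -> bytes:
    """Serialize the event as compact JSON with no extra whitespace."""
    return json.dumps(asdict(event), separators=(",", ":")).encode("utf-8")


def raw_frame_bytes(width: int, height: int, bytes_per_pixel: int = 1) -> int:
    """Size of one uncompressed frame (bytes_per_pixel=1 for 8-bit grayscale)."""
    return width * height * bytes_per_pixel


# Usage: compare one summary message with one raw VGA grayscale frame.
event = OccupancyEvent("cam-01", 1506000000, "meeting-room-2", 3)
payload = encode_event(event)
frame = raw_frame_bytes(640, 480)  # 640 * 480 = 307,200 bytes
print(f"event: {len(payload)} bytes, raw frame: {frame} bytes")
```

Sending a record of well under 100 bytes per event instead of roughly 300 kB per frame is one plausible way a camera can deliver very low-bandwidth data to a gateway; the actual system may use a different encoding or event model entirely.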

Graphene Photodetectors

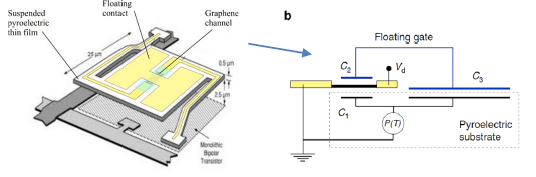

The third start-up pitch of the session, presented by Emberion’s Tapani Ryhänen, again focused on leveraging low-cost, standard CMOS, this time by integrating it with graphene and other nanomaterials to create high-performance, low-noise sensors for automotive, night vision, surveillance, thermal and hyperspectral imaging, machine vision, and spectroscopy applications.

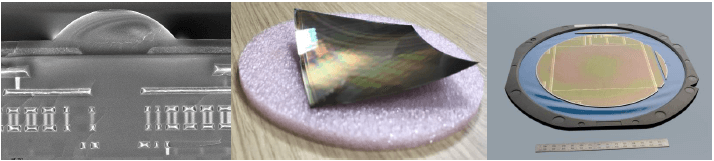

Why graphene? According to Ryhänen, graphene (a single, tightly packed layer of carbon atoms bonded in a hexagonal honeycomb lattice) can be a better analog amplifier than silicon field-effect transistors (Si FETs). Because graphene provides a built-in amplifier for every pixel, it integrates more easily. With no load resistor, there is no need for chopping, and the device can run in DC mode. As a result, graphene photodetectors enable ultra-sensitive thermal and hyperspectral imaging that is scalable to large sensor arrays. Emberion has used this technology to create a versatile platform for building various sensors on the same measurement principle, making it possible to integrate the same graphene technology on flexible and large-area substrates (Figure 4).

DC mode. (Courtesy: Emberion)

Customizing CMOS Image Sensors for Biomedical Applications

IMASENIC, a six-month-old start-up based in Barcelona, Spain, takes its name from three words, IMAge SENsor IC, which makes sense (no pun intended) because the company focuses on developing “advanced, custom CMOS image sensors from pixel design to the digital interface,” according to CEO Renato Turchetta (Figure 5). Again, the theme was leveraging industry know-how, in this case experience gained at Broadcom and the UK’s Science and Technology Facilities Council, combining R&D and commercial experience to launch a start-up that develops advanced CMOS image sensors and companion ICs for biomedical, scientific, space, and high-speed imaging applications. The company’s list of capabilities ranges from common features such as global and rolling shutter, high dynamic range, and backside illumination to more niche ones such as single-photon avalanche detectors and IR, UV, and X-ray detection. After hearing it, it’s clear that the company’s differentiator is how it applies these capabilities to design customized CMOS image sensors for new application spaces. The presentation was heavy on marketing and light on technical detail. The clear message of this pitch was “we’re open for business and we are hiring.”

While this was just a sample of the many presentations over the two days, what I observed and heard during this session makes one thing clear. In addition to the trend Frederic Breussin of Yole Développement reported, namely that the pixel race is over and the focus is turning to performance, leveraging mature technologies in new applications beyond making pretty pictures is what will continue to drive the image sensor space for some time to come. ~ F.v.T.