At ECTC 2016, which took place at the Cosmopolitan Las Vegas, Las Vegas, May 31-June 1, 2016, the special session entitled, Memory Technology Advances and Prospects for Packaging may have been one of the “most important starts to ECTC ever.” At least that was TechSearch’s, Jan Vardaman’s impression, and I can’t disagree, as it aptly set the tone for the week. Kudos to the session chair, Nanju Na, Xilinx, for lining up key industry experts from companies that have paved the way for 3D memory stacks and 2.5D integration: namely Bryan Black, AMD; Sandeep Bharathi, Xilinx; Nick Kim, SK hynix; Ravi Mahajan, Intel; and Craig Hampel, Rambus. Each presented updates on their company’s approaches to solving the memory bandwidth challenge through advanced packaging. Up first, Kim discussed Hynix memory package roadmap, noting that the company is leading new and advanced memory package development against the diverse and rapidly changing circumstances of the semiconductor industry. He offered some key takeaways:

Up first, Kim discussed Sk hynix’ memory package roadmap, noting that the company is leading new and advanced memory package development against the diverse and rapidly changing circumstances of the semiconductor industry. He offered some key takeaways:

- Flip chip, wafer-level chip scale packaging (WLCSP), and stacking using through silicon vias (TSVs) are promising technologies that satisfy requirements for faster speed, wider bandwidth, and smaller, thinner packages.

- TSVs are in mass production for DRAM.

- 3D system-in-package (SiP) is suitable for placing memory next to logic, such as has been done on the Fiji processor introduced last year by AMD. sk Hynix provided the high bandwidth memory cube (HBM) for that product.

- Fan-out wafer level (FOWLP) is a viable option for packaging memory, but cost reduction is needed. He specifically identified TSMC’s integrated fan-out (InFO) and Amkor’s silicon wafer integrated fan-out (SWIFT) as most promising options.

- New process technologies being used for high-reliability and low-cost packaging include stealth dicing, and gold-free wire in wire bonding, such as aluminum or palladium Cu wire.

Mahajan talked about the trends of memory bandwidth, and that much like Moore’s Law, it doubles every 2.5 years. This has been consistent for over 15 years, he said. “Packaging needs to figure out how to handle this.” One way is to bring the memory closer to the CPU to improve power efficiency and performance. Speed and data trends call for wide and slow busses to lower power needs. He said a wide bus and on-package interconnect create power efficient interconnects between the CPU and memory.

Hempel veered in a different direction to talk about packaging memory from an architecture perspective. He queried when it comes to architecture and package design, which comes first? He explained how TSVs and 3D affect system architecture, noting that the three aspects that drive include capacity, power, and performance.

According to Hempel, using TSVs allows for a “master and slave” approach through the stack to improve density and capacity, which is critical for high-capacity data centers. He described the different ways to add capacity through 3D stacked DRAM:

- Wide I/O uses the reduced length and parasitics of TSVs to reduce power.

- HBM provides “near memory” for very high density interconnects on a simple logic layer.

- The hybrid memory cube (HMC) differs architecturally. It provides advanced features in the logic layers including a high-speed link that allows for placement further than the CPU. It’s these differences in the functional logic layers that make HBM and HMC notably different.

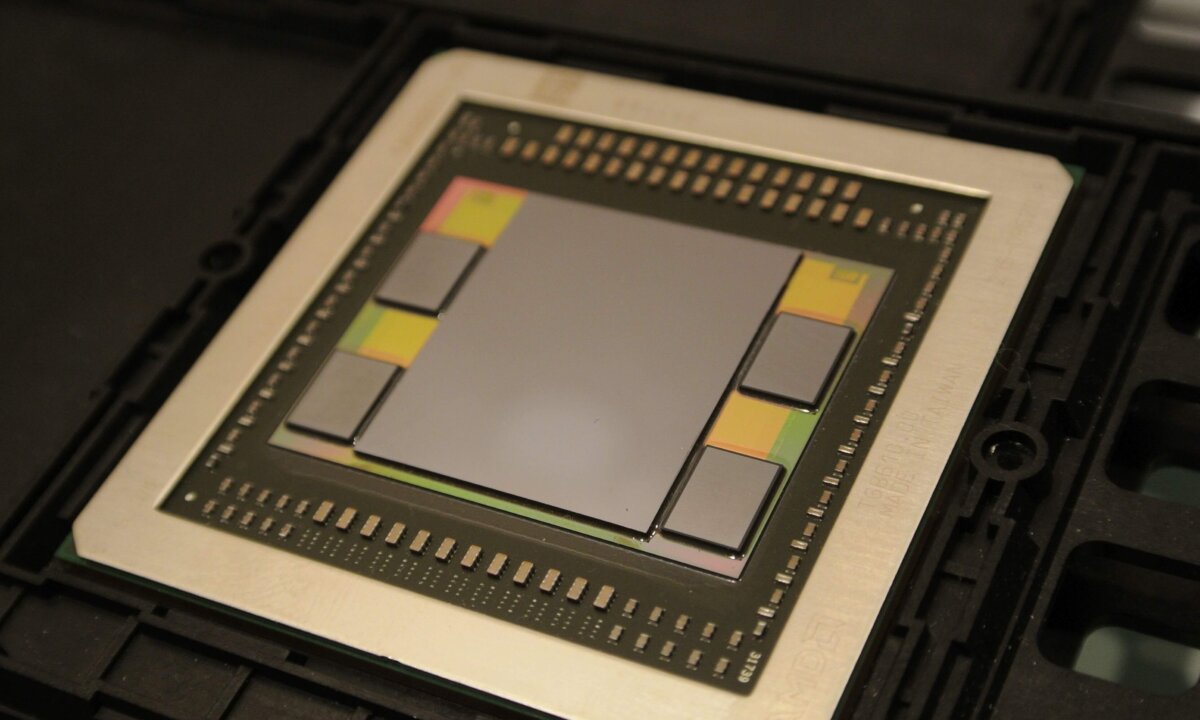

For his part, Bryan Black, AMD mostly reprised his presentation from SEMICON West 2015, The Road to the AMD Fiji GPU, (and admitted as much in the beginning of his talk). I wrote about that here, but as is always the case with Black, he never REALLY gives the same talk twice. He made some especially profound statements in this version; as a silicon guy giving a nod to packaging: “15 years ago, I considered myself to be a “cowboy architect”, and didn’t have any interest in packaging. But then 15 minutes of learning about TSVs changed everything forever. Since then I’ve been fully focused on die stacking as an extension of Moore’s Law.” Black went on to say that he left Intel because it was clear “they were never, ever going to do die stacking.” That, of course, has changed, as evidenced by the work Mahajan is doing now.

Black also noted that by using HBM, they were able to deliver an enormous amount of bandwidth, only 60% of which is used by Fiji. This indicated to him that with HBM, we have rolled back the clock and have many years of performance scaling ahead of is before we run out of gas. In fact, Fiji is just the beginning. It’s all about co-design, he said. They are now working on a cost reduction and 3D usage model and are figuring out how to stack directly on top of the logic device.

The final speaker was Xilinx’ Bharathi, who talked about the company’s most recent first: 3D on 3D. Remember that Xilinx was the first to implement 2.5D interposer integration for FPGAs in its Virtex family (and won a 3D InCites award in the process). Now they have built a platform, christened UltraScale, which combines FinFET technologies, 3D IC structures, new memory, and multi-processing SoC (MPSoC) technologies to bring a higher level of integration into FPGAs. “FPGA is a system enabler,” explained Bharathi,“Memory technologies are evolving, and we need to support more SerDes bandwidth and compute bandwidth.” He added that packaging is a critical area to Xilinx. If you address packaging across the supply chain, you can reduce cost. The overall package spectrum requires industry support.

Jan Vardaman asked the question in many of our minds. Noting that while it’s clear TSVs have been widely adopted for memory in high-performance computing, could the panelists say when TSVs would be adopted into the top package in package-on-package (PoP) configurations, essentially bringing the large volumes the industry needs to support this technology. The answers were varied, but all tied to cost reductions.

Kim said currently, wire bonding meets the top package requirement in PoP, and so SK hynix doesn’t plan to change that “anytime soon.” Black was confident that the cost will come down. He reminded us that in 2011, the ASP for a Xilinx FPGA package was $17K. Thanks to AMD’s work, the cost of that package is now below $700. “The cost will keep going down. For a cell phone to adopt it, it has to be a commodity,” said Black. “We are a number of years away from it, but it will happen.”

Hempel concurred, calling it “trickle down technology.” What’s missing, he said is the business model of cooperation. Unlike the DRAM industry, integration is missing in mobile. As the economic benefit of TSV based DRAM is realized, more vendors will be able to negotiate that.

The key takeaway from this session was the fact that finally, and into the foreseeable future, advanced packaging technologies, and not scaling, are critical to reaching the capacity, power, and performance of today’s devices.

Traditionally the acceptance by users ( Volume ) of a new and higher performance technology has been in inverse proportion to its higher Cost. Not surprising then that the sweet spot in terms of mass adoption for distinct applications has happened somewhere in the middle of that spectrum relating Incremental Cost to Volume.

For Flip Chip that sweet spot was Microprocessors in PCs. For TSV based packages that sweet spot might not be in the PoP which stacks the SoC over DRAM in a Smart Phone ( market already saturating ) as eagerly expected by simple minded Market Analysts basing their forecasts on their imperfect understanding of technology and history.

In case Brian did not mention it, the incremental cost for Packaging ( incl. that for HBM ) in their Fiji ( in your photo ) was 30 % and it got them a performance ( in GFlops, professionally benchmarked ) gain of 50% over their earlier GPU w/ traditionally packaged DRAM. This may not be very enticing for many low enders like the makers of Smart Phone etc. to get interested.

So for TSVs the sweet spot would initially be in bandwidth hungry Cloud Computing, but later perhaps in a really high volume SiP in some as yet undefined IoT system ( however most likely NOT using Area Array TSVs as in Wide I/Os for Memory but just peripheral TSVs as in the 3d stacked BSI image detectors pioneered by SONY ).