Bill Gates starts the introduction of his book, How to Avoid a Climate Disaster, with the header “51 Billion to Zero.” This gave me pause and made me question the logic of getting to zero, but after doing a significant amount of research and reading a lot of 8×10 glossy Environmental, Social and Governance (ESG) reports, I’m beginning to better understand the drive to zero. It also helped that we just had the snowiest December on record where I live, followed by the driest January on record. However, what really got me thinking more about this was a recent announcement by Meta of a new server system it is building for the metaverse.

Meta’s new AI supercomputer (featured above), the AI Research SuperCluster (RSC), was the result of nearly two years of work involving several hundred people. (Photo credit: Meta Platforms, Inc. Source: The Wall Street Journal)

The quote below from Mark Zuckerberg highlights my concerns with how the electronics industry is addressing, or not really addressing, emissions and the increase in electricity usage in the industry. And while companies such as Meta can move to renewables, building wind farms and solar farms with battery storage may not be the answer we need to reduce the electronics industry’s impact on the planet.

“The experiences we’re building for the metaverse require enormous compute power…and RSC will enable new AI models that can learn from trillions of examples, understand hundreds of languages, and more,” Meta CEO Mark Zuckerberg said in a statement provided to The Wall Street Journal.

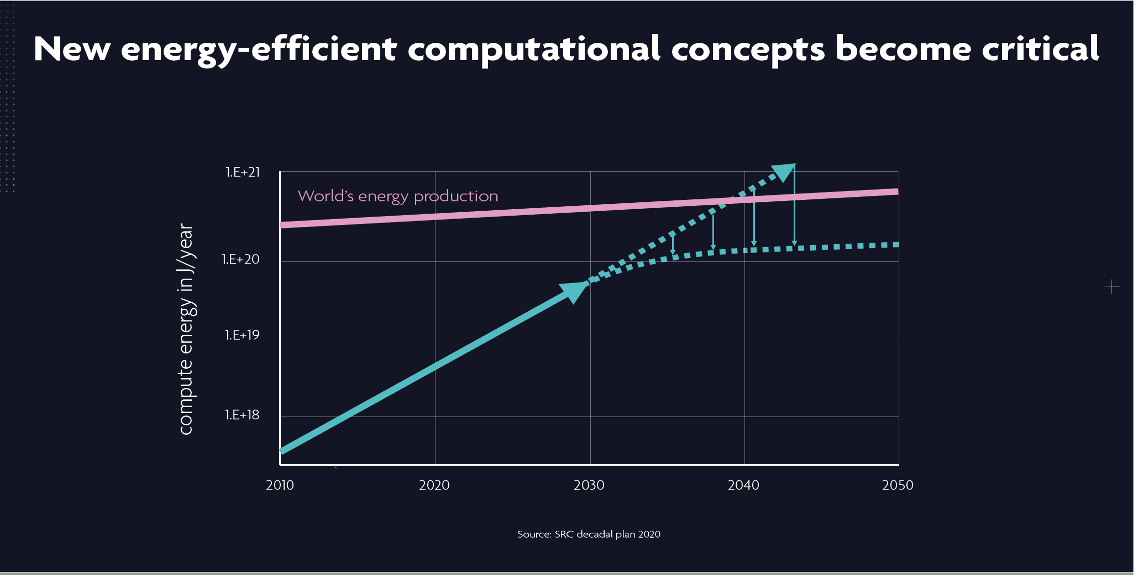

While I fully understand the need for massive computing power to enable new AI models, we as an industry need to find a way to reduce the amount of power needed to run AI. Current projections show compute energy use rising at a significant rate over the next few years, which is neither sustainable nor healthy for the environment (Figure 1).

The data and energy increases are significant, and the statement I have heard multiple times at conferences is, “Oh, if we use renewables to support this, it’s OK, as it won’t add to our carbon footprint.”

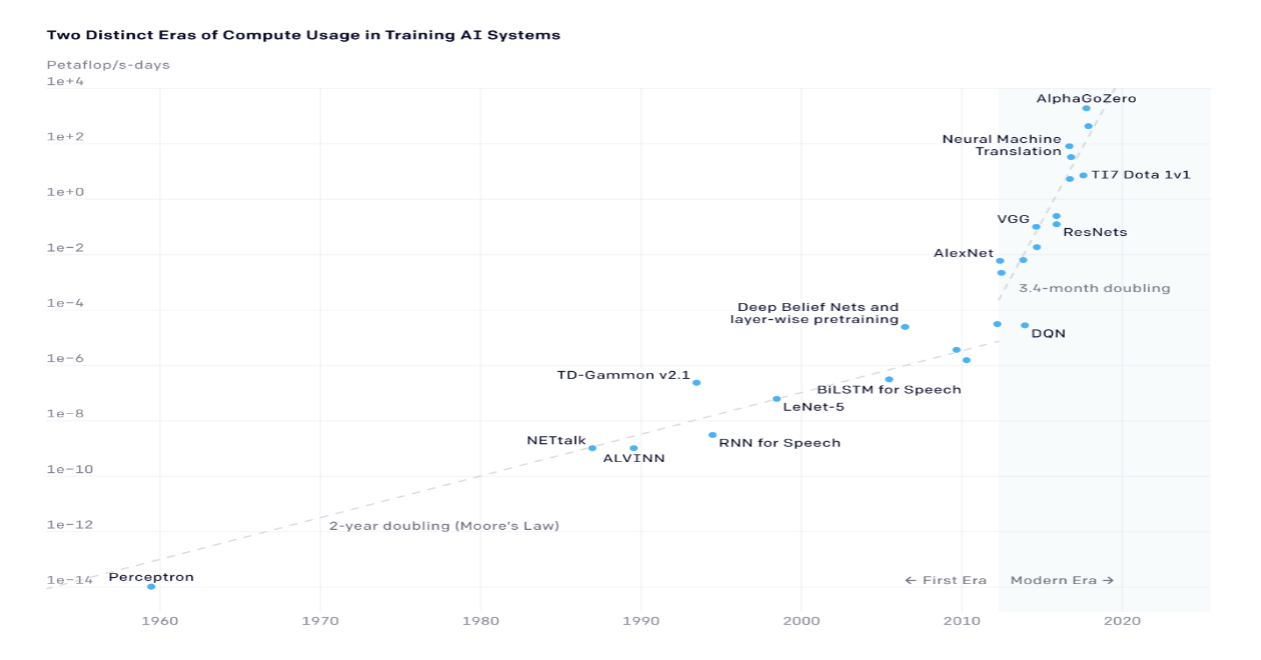

In a study released in April of 2020 by the University of Massachusetts, Amherst, researchers found that generating the electricity consumed during the training of a transformer, a type of deep-learning algorithm, can emit more than 626,000 pounds of carbon dioxide. The amounts vary depending upon the requirements of the training cycle (Figure 2).

In an article for Data Science from Sept 2019, Marin Vlastelica of the Max Planck Institute for Intelligent Systems broke the data down a bit further. In 2019 he calculated that a data center uses 52.8 MWh/day, which is, in Dr. Vlastelica’s words, a scary number. Vlastelica then worked out the number of windmills you would need to produce the power for the roughly 500,000 data centers he believes are operating in the world, approximately 40% of which are in the United States. He estimates that you would need 2.5 million wind turbines to meet demand. At the time of his article, Vlastelica estimated that the United States and Germany had fewer than 100,000 windmills in operation. As of January 2021, according to the USGS, the United States had increased the number of wind turbines from the 56,000 that Vlastelica cited to 67,000, an increase of approximately 5,500 turbines per year. I’ll leave it to you to do the math.
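For anyone who wants a head start on that math, here is a rough sketch using only the numbers quoted above. It rests on two simplifying assumptions of mine, not Vlastelica’s: that turbine demand splits between countries in the same 40% proportion as data centers, and that the United States keeps adding turbines at a constant 5,500 per year. The function and variable names are purely illustrative.

```python
# Back-of-the-envelope check of the wind turbine figures quoted above.
# All inputs are the article's numbers; the linear-growth model and the
# 40% US split of turbine demand are simplifying assumptions.

DATA_CENTERS = 500_000              # Vlastelica's estimate of data centers worldwide
MWH_PER_DATA_CENTER_PER_DAY = 52.8  # Vlastelica's per-data-center figure
TURBINES_NEEDED = 2_500_000         # Vlastelica's estimate of turbines required
US_SHARE = 0.40                     # share of data centers in the United States
US_TURBINES_2021 = 67_000           # USGS count cited above
US_TURBINES_ADDED_PER_YEAR = 5_500  # growth rate cited above


def years_to_reach(target, current, added_per_year):
    """Years needed to grow from current to target at a constant yearly addition."""
    if added_per_year <= 0:
        raise ValueError("added_per_year must be positive")
    return max(0.0, (target - current) / added_per_year)


total_mwh_per_day = DATA_CENTERS * MWH_PER_DATA_CENTER_PER_DAY
implied_mwh_per_turbine_per_day = total_mwh_per_day / TURBINES_NEEDED
us_turbines_needed = TURBINES_NEEDED * US_SHARE
years_for_us_share = years_to_reach(
    us_turbines_needed, US_TURBINES_2021, US_TURBINES_ADDED_PER_YEAR
)

print(f"Total data center demand: {total_mwh_per_day:,.0f} MWh/day")
print(f"Implied output per turbine: {implied_mwh_per_turbine_per_day:.2f} MWh/day")
print(f"Turbines needed for the US share: {us_turbines_needed:,.0f}")
print(f"Years for the US to get there: {years_for_us_share:.0f}")
```

Under these assumptions, the US share alone works out to roughly 170 years of building at the current pace, and that is before counting any other use of wind power.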

While solar is an option, there is a similar problem. Meta, Google, AWS, and Microsoft are all purchasing green energy for their operations, but can the solar industry keep up? As noble as using solar sounds, you still have issues with storage, which requires batteries. According to a recent article in Energy Storage News, one solar company announced it can provide power to the city of San Jose for approximately 16 hours a day. This includes daylight generation and 68 MW/275 MWh of battery storage. To me, this highlights the challenges of using solar for 24×7 sourcing of power for a facility. You need enough batteries to be able to discharge over an 8–12-hour period when the panels are not producing.
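The storage gap is easy to see with a little arithmetic. The sketch below takes the 68 MW/275 MWh rating quoted above and assumes, for simplicity, a constant draw at full rated power with no conversion losses; the helper names are hypothetical.

```python
# Rough battery sizing check using the 68 MW / 275 MWh figures quoted above.
# Assumes a constant draw at rated power and ignores losses and depth-of-discharge limits.

BATTERY_POWER_MW = 68
BATTERY_ENERGY_MWH = 275


def discharge_hours(energy_mwh, power_mw):
    """Hours a battery lasts when drained at a constant power draw."""
    if power_mw <= 0:
        raise ValueError("power_mw must be positive")
    return energy_mwh / power_mw


def energy_needed_mwh(power_mw, hours):
    """Energy required to sustain a constant power draw for a number of hours."""
    return power_mw * hours


hours_at_rated_power = discharge_hours(BATTERY_ENERGY_MWH, BATTERY_POWER_MW)
print(f"Runtime at rated power: {hours_at_rated_power:.1f} hours")

for night_hours in (8, 12):
    needed = energy_needed_mwh(BATTERY_POWER_MW, night_hours)
    ratio = needed / BATTERY_ENERGY_MWH
    print(f"{night_hours} hours at {BATTERY_POWER_MW} MW needs {needed} MWh "
          f"({ratio:.1f}x the installed capacity)")
```

At full rated power, the battery lasts only about four hours. Covering an 8–12-hour night at the same output would take roughly two to three times the installed capacity.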

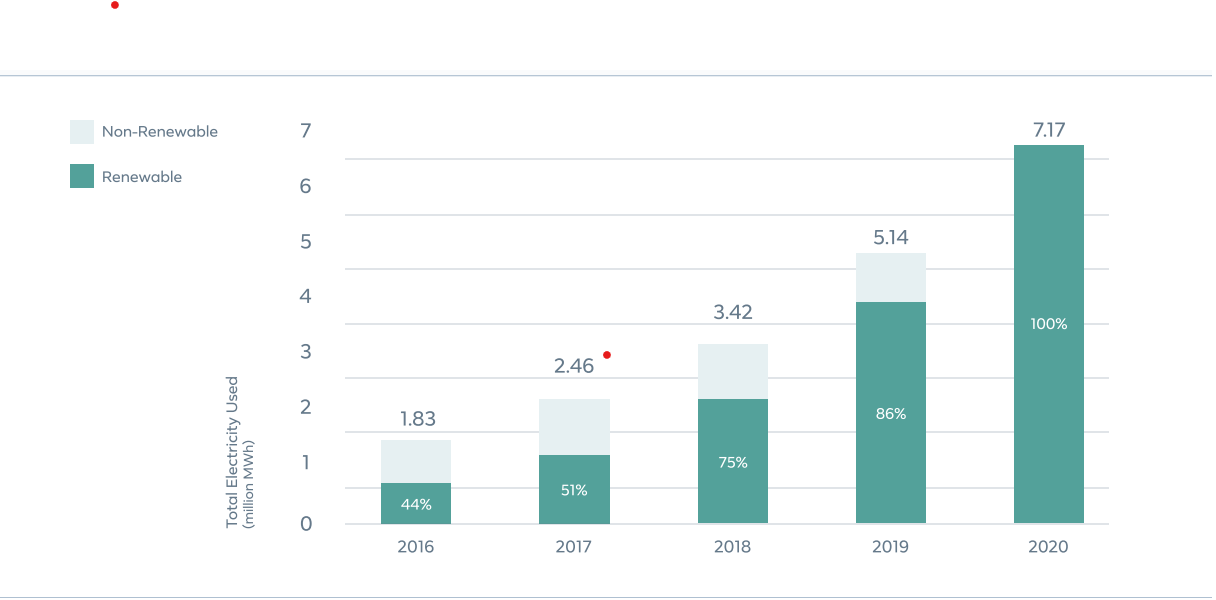

Figure 3 shows Meta’s electricity consumption over the past 5 years, an increase of nearly 4x. While this is all green energy, there are other issues associated with this massive increase. Where, for instance, is the conservation that should be taking place, instead of consumption doubling every two years?
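To put the growth figures above on a common footing, the sketch below converts “nearly 4x over 5 years” into a doubling time and an annual growth rate. It assumes steady exponential growth over the whole period, which real consumption data will only approximate, and the function names are illustrative.

```python
import math

# Converts a total growth factor over a period into a doubling time and an
# annual growth rate, assuming steady exponential growth (a simplification).


def doubling_time_years(growth_factor, years):
    """Years needed to double, given total growth of growth_factor over years."""
    if growth_factor <= 1 or years <= 0:
        raise ValueError("growth_factor must exceed 1 and years must be positive")
    return years * math.log(2) / math.log(growth_factor)


def annual_growth_rate(growth_factor, years):
    """Compound annual growth rate implied by growth_factor over years."""
    if growth_factor <= 0 or years <= 0:
        raise ValueError("growth_factor and years must be positive")
    return growth_factor ** (1 / years) - 1


GROWTH_FACTOR = 4  # "nearly 4x", taken from the text
PERIOD_YEARS = 5   # "past 5 years", taken from the text

print(f"Doubling time: {doubling_time_years(GROWTH_FACTOR, PERIOD_YEARS):.1f} years")
print(f"Annual growth: {annual_growth_rate(GROWTH_FACTOR, PERIOD_YEARS):.1%}")
```

A 4x increase over five years corresponds to doubling about every two and a half years, or roughly 32% growth per year, which is close to the “doubling every two years” shorthand.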

While I am a big fan of renewables and battery storage to help reduce GHG emissions, there are also challenges associated with the implementation, some of them environmental.

A Tesla battery pack currently uses approximately 22 pounds of lithium and about 10 pounds of cobalt. Cobalt is currently a key component of Li batteries, although Tesla and others are looking to move to other materials, such as iron phosphate, to replace it. There are many issues with cobalt: first, there is very little of it as a natural resource in the United States, and second, it is a conflict mineral, meaning there are strict criteria around sourcing cobalt for all US companies. Hopefully, the new materials will work as well as or better than cobalt in the batteries. Lithium is also hard to come by in the United States. Most of the world’s supply comes from Australia or South America, but China controls nearly 97 percent of the refining, making the sourcing of Li a supply chain issue.

There are Li sources in the United States, including Thacker Pass in Nevada and a recent find in the Salton Sea in California, but a great deal of controversy surrounds the environmental aspects of mining the mineral. China is also the major backer of the Thacker Pass mine, and the Li would still go to China for processing.

Approval for extracting Li from the Salton Sea has recently been received. While this seems a win-win situation, combining geothermal power and Li extraction, a US-based processing plant is still needed to complete the supply chain picture.

A side issue of producing a huge number of batteries is collection and recycling, which is off to a good start but will remain dependent upon the pricing of lithium and other metals.

What needs to happen?

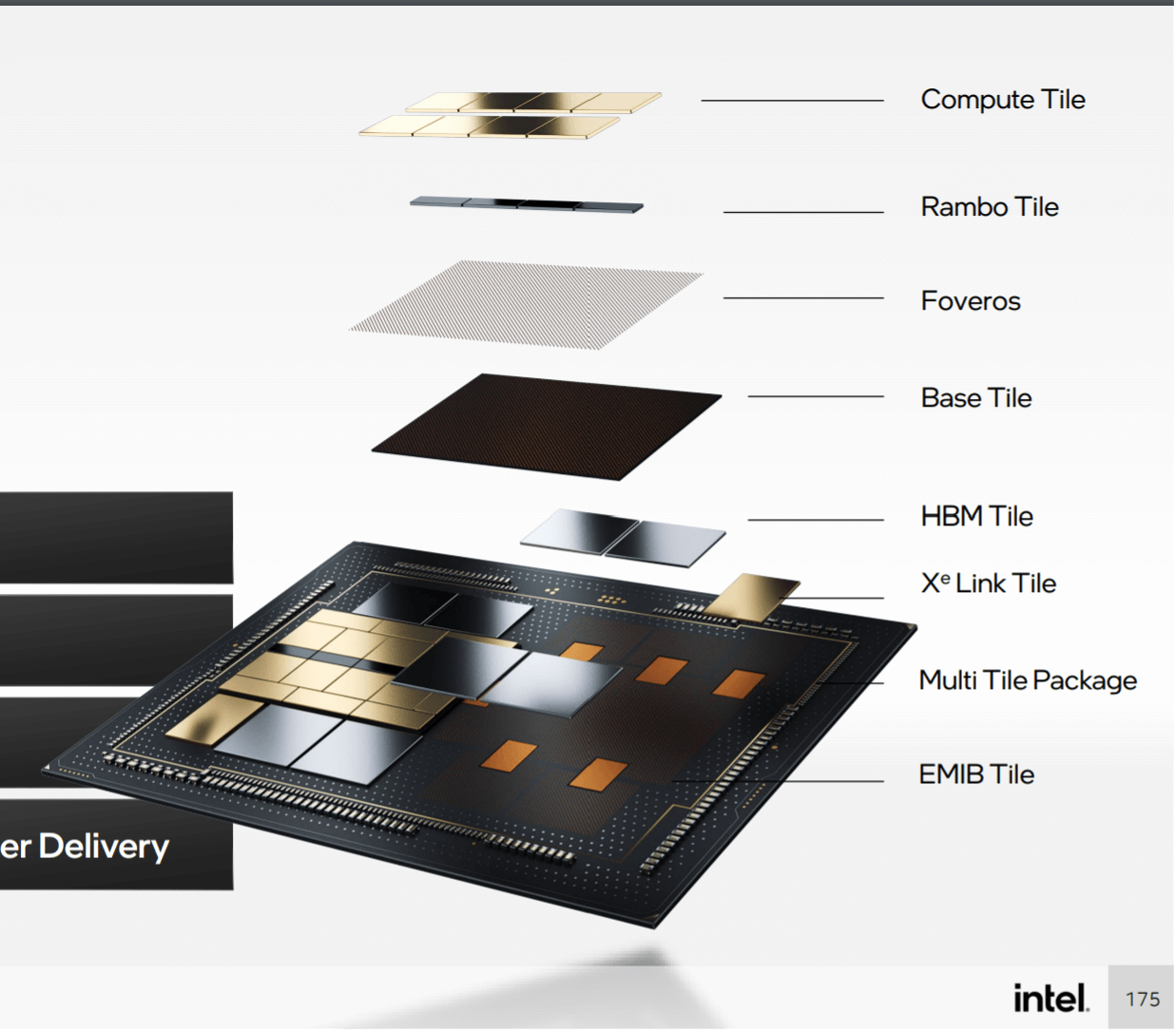

It’s not all doom and gloom. Chip companies such as Intel and Nvidia, the key suppliers, have committed to making chips more energy-efficient as they move forward. Some of this is the natural progression as transistors get smaller, but packaging technology using chiplets, being developed by Intel, TSMC, AMD, and others, is also enabling companies to reduce the amount of power consumed during compute operations.

Figure 4: Intel Ponte Vecchio demonstrates the implementation of tiles or chiplets in an advanced package. (Source: Intel Architecture Day)

Startups such as Cerebras have created chips specifically for AI that reportedly use 1/10 of the power and space of a rack of GPUs providing equivalent computational power. This reduces not only the power needed for compute but also the floor space, which would require air conditioning. Companies such as IBM have created supercomputers, such as Satori, that are designed to perform energy-efficient AI training. So the industry is attempting to address the challenge, but will it be enough?

Much of the industry is in the early stages of figuring out how to execute on sustainability. There are some great goals and aspirations. However, in going green, the industry needs to focus not only on renewables but also on reducing consumption, in order to achieve the goal of actually reducing its carbon footprint and going from 51 Billion to Zero.